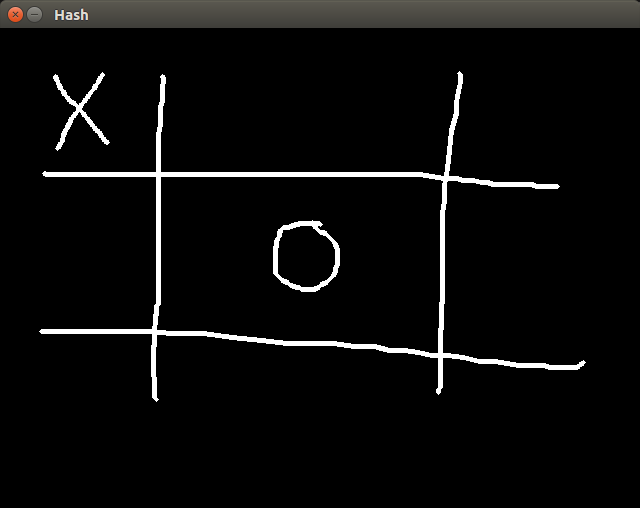

In this tutorial I will show you how to parse a hand-drawn hash game with Computer Vision techniques to determine who’s the winner (if any). See the example below:

For this tutorial we are going to use OpenCV 3.2.

Basically, in order to perform what we intend, we need first to detect where are the ‘x’ and the ‘o’, and later check if they are aligned in such way that indicates a win (aligned on horizontal, vertical or diagonal).

Check the image below:

This is the kind of image we want to deal with. In order to detect each element of that image, we need to segment them. We can do that easily with the OpenCV connectedComponents function. What it does is very simple: For each white pixel of a binary image it associates a label indicating to which group (or connected component) the pixel belongs to. A connected component is a set of white pixels where each pixel is a neighbor of some other white pixel within the same component.

For the image above, it would output three connected components: the hash, the ‘x’ symbol and the ‘o’ symbol. Once we have all them three apart, the detection process becomes much easier.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 | |

Ok! In order to call the connectedComponents function, we need to pass a image of same dimension of the input image. It will store the label associated to each pixel. Then it iterates over each pixel of the label image and saves the position in a map that associates a label to a vector of positions. After that, we want to get bounding rect and a image containing only the pixels of a determined component. For that I’m calling the getComponentImg function. Let’s see how it’s implemented:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 | |

Very straight-forward, since we already have a list containing all the positions of the elements within the connected component. All we need to that is get the min and dimensions of those pixels, then calculate a bounding rect, creating a image of the dimension of the bounding rect, iterate over each position and set it in the image we just created.

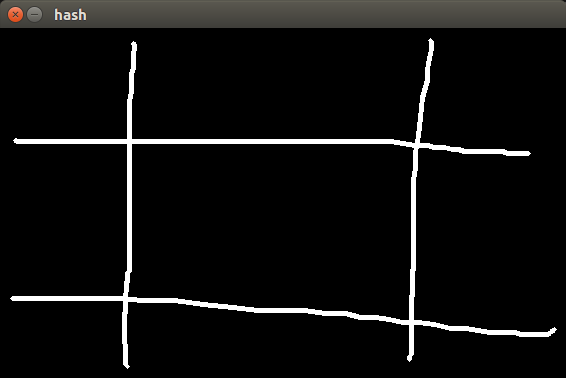

Ok!!! Now we have each element separated, as we can see below:

How can we accomplish the detection now? Well, there are many many ways. I’m going to diver the machine learning path. We will train a neural network with many examples of ‘x’ and ‘o’, in such way that the next time the user draw any of them, the classifier will know which of them the user drew. Once we know that, we just need to check the alignment and ta-dah! Very simple.

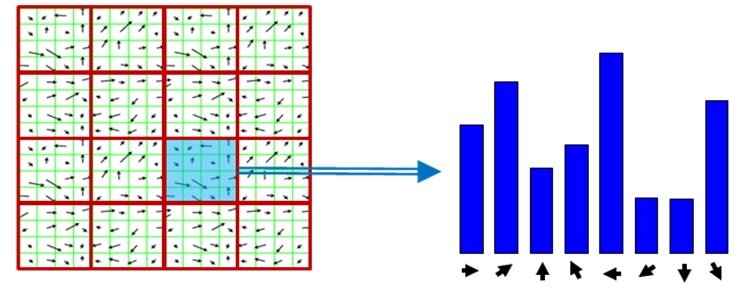

Obviously, we can’t train with the raw images (because their dimension vary. we could draw at a time a big ‘x’ and then a small ‘x’. we could resize, we then we were performing distortions). We need a feature descriptor. In this example, I’m going to user a Histogram of oriented gradients, because it makes the most sense, since the ‘x’ symbol have a very different gradient than a ‘o’.

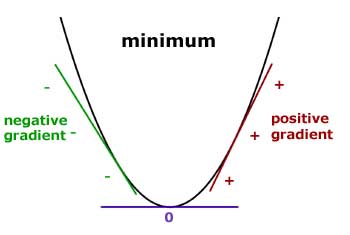

Ok, ok, calm down!!! What is a gradient you are talking about, exactly?

In Calculus, gradient is the rate of change of a function at a given point. Just see image below:

This represent the gradient of the f(x) = x2 function. Obviously, since we are squaring ‘x’, higher values of ‘x’ outputs much higher values of f(x) than lower values of ‘x’. Thus, the gradient on the left side is negative (since it is the direction which f(x) changes most) and has a increasing values as ‘x’ increase. The same can be said about the right side, but with oposite direction.

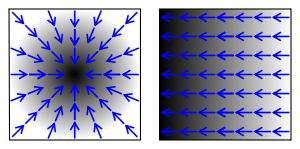

But what does it have to do with images? Can we calculate gradients of images? Of course yes!!! Images are nothing else than 2D discrete functions.

Since we can’t know exactly the f(x) for a image, we need to calculate an approximation for the gradient, calculating the difference between two neighbors pixels for each dimension. In fact, this is exactly what the Sobel filter does.

In possession of both gradients on ‘x’ and ‘y’ direction, we can calculate the angle to which the gradient is pointing to by taking the inverse tangent of x and y. For the ‘x’ symbol, the gradient will point alongside the edges (thus only two directions) while for the ‘o’ symbol, the gradient will tangent each pixel, and since it’s a ellipse, we are going to have gradients pointing to many directions. That way we can distinguish a symbol from another.

Enough talking!! Let’s see the code:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 | |

Set a variable named HISTOGRAM_SIZE to determine the number of bins of the histogram (lower values are better, due to the curse of dimensionality thing).

Training the classifier is pretty straight-forward also, once we have the histograms of each sample:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 | |

NUM_DRAWINGS is the number of samples for each class. The samples are stored in a 3D array named gFeatures. Pay special attention to the layerSizes variables. It’s the variable which determines the number of neurons for each layer. It has a profound impact in the performance of a neural network.

Finally, we just need to get the label associated to each component, group them and check their alignment.

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 | |

In the final code I included many more details, such as asking the user to draw some examples of ‘x’ and ‘o’ so the neural network have samples to train on. See it below:

FINAL CODE

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260 261 262 263 264 265 266 267 268 269 270 271 272 273 274 275 276 277 278 279 280 281 282 283 284 285 286 287 288 289 290 291 292 293 294 295 296 297 298 299 300 301 302 303 304 305 306 307 308 309 310 311 312 313 314 315 316 317 318 319 320 321 322 323 324 325 326 327 328 329 330 331 332 333 334 335 336 337 338 339 340 341 342 343 344 345 346 347 348 349 350 351 352 353 354 355 356 357 358 359 | |